I am currently working on a transcriptome data set for a phylogenomic project. Building trees from huge multilocus data sets obtained by NGS is now common practice, but how reliably we can reconstruct phylogeny from them is still largely unknown.

In my data set, most loci carry very little information because exon sequences are often extremely conserved (the number of variable sites is often less than 1 per 1000 bp). Some loci may span thousands of bases, so the effect of recombination within a locus cannot simply be ignored. In addition, as is often the case with NGS data, there are quite a large number of missing sites and missing loci. I suspect that my transcriptome data badly violate the common assumptions of phylogenetic inference.
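As a rough check of how uninformative a locus is, the fraction of variable sites can be counted directly from an alignment. The following is a minimal sketch, assuming the alignment is already loaded as a dictionary of equal-length strings; the function name, the toy sequences, and the choice of which characters count as missing are my own assumptions, not part of any particular pipeline.

```python
# Assumption: gaps, '?' and N are all treated as missing data.
MISSING = set("-?Nn")


def variable_site_fraction(alignment):
    """Return the fraction of columns with at least two distinct
    non-missing states. `alignment` maps taxon name -> aligned sequence."""
    seqs = list(alignment.values())
    if not seqs:
        raise ValueError("alignment is empty")
    length = len(seqs[0])
    if any(len(s) != length for s in seqs):
        raise ValueError("sequences must all have the same aligned length")
    if length == 0:
        return 0.0
    variable = 0
    for column in zip(*seqs):
        # Ignore missing characters and compare bases case-insensitively.
        states = {c.upper() for c in column if c not in MISSING}
        if len(states) > 1:
            variable += 1
    return variable / length


# Hypothetical toy locus: one variable column out of ten.
toy_locus = {
    "sp1": "ACGTACGTAC",
    "sp2": "ACGTACGTAC",
    "sp3": "ACGTACGAAC",
    "sp4": "ACG---GTNN",
}
print(variable_site_fraction(toy_locus))
```

This counts any variable column, including singletons, so the number of parsimony-informative sites will be even lower.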

Methods for tree inference also matter. Is concatenating all loci better than using multispecies coalescent methods? Should you infer gene trees first, or do all the inference simultaneously?

To check the reliability of my phylogenetic inference, I searched for recent papers reporting the effects of these model violations and how different methods behave under different conditions, and summarized them here.

-Recombination

Lanier & Knowles (2012) reported that the effect of recombination is negligible, at least within the range of recombination rates they tested (ρ = 0.1–20) and with the programs they used. Their simulations showed that sample size and the depth of the species tree have much stronger effects on the accuracy of multispecies coalescent methods.

Springer & Gatesy (2016) questioned the use of recombining loci. They argue that the effective length of the non-recombining portions of genes is infinitesimally small, and that it is therefore illogical to use multispecies coalescent methods to infer phylogeny from frequently recombining loci.

Only a few studies have examined the effects of recombination on phylogenetic inference. However, the idea that recombination is negligible looks plausible to me, because recombination events in deep ancestral populations seem to rarely affect the shape of gene trees.

-Low-variation loci

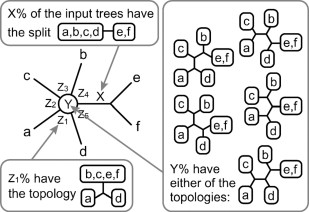

There are opposing views on the usefulness of low-variation loci. Lanier et al. (2014) found that adding low-variation loci (loci with θ = 0.001, equivalent to 1 difference per 1000 bp) did not improve the accuracy of two-step likelihood inference (that is, gene tree inference followed by species tree inference). On the other hand, a Bayesian method, *BEAST, can gain accuracy from these marginally informative loci. They concluded that handling gene tree uncertainty is particularly important when you analyse data sets with little information.

The results of Xi et al. (2015) are quite the opposite. They reported that two-step inference (RAxML gene trees + MP-EST species tree) can indeed become more accurate with a large number of low-variation loci. They suggested that it is biased gene trees that compromise accuracy.

I guess that this difference resulted from a slight difference in the methods they used. While Lanier et al. built gene trees as majority-rule consensus trees from MrBayes, Xi et al. used maximum likelihood trees from RAxML. Taking a consensus tree probably wiped out the signal of the low-variation loci and made them completely uninformative. If they had used something like a maximum clade credibility tree instead of a consensus, the results might have been different.

One surprising point is that even a small amount of non-randomness in a program can positively mislead inference when you use a very large number of loci.
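To get a feel for how little signal a locus with θ = 0.001 carries, here is a small sketch based on Watterson's expectation for the number of segregating sites, E[S] = θ · L · Σ 1/i (i = 1 … n−1). It assumes θ is given per site, that the n sequences come from a single panmictic population under the standard neutral coalescent, and the infinite-sites model. In a multispecies data set, divergence between species adds further differences, so this covers only the within-population contribution. The function names are mine.

```python
def harmonic_number(k):
    """Sum of 1/i for i = 1..k (0.0 when k is 0)."""
    return sum(1.0 / i for i in range(1, k + 1))


def expected_segregating_sites(theta_per_site, length_bp, n_sequences):
    """Watterson's expectation E[S] = theta * L * a_n, with a_n = H_{n-1}.

    Assumes a single neutral, panmictic population and infinite sites.
    """
    if n_sequences < 2:
        raise ValueError("need at least two sequences")
    return theta_per_site * length_bp * harmonic_number(n_sequences - 1)


# With theta = 0.001 and a 1000 bp locus, two sequences are expected
# to differ at one site; more sequences add only a few more.
for n in (2, 5, 10):
    print(n, expected_segregating_sites(0.001, 1000, n))
```

Because the harmonic sum grows only logarithmically with n, adding more sequences per locus adds very few extra variable sites under these assumptions.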

-Missing data

There is a long tradition of debate over the effects of missing data on phylogenetic inference, so plenty of papers consider this issue. A recent thorough evaluation is Xi et al. (2015) (another work by the authors above). Their general conclusion is that missing data can reduce the accuracy of tree inference, especially when they are concentrated in some species. The detrimental effects of missing data are minimal when the missing data are “locus-based”, that is, when some loci have more missing data than others. Similar detrimental effects of “taxa-based” missing data were reported by Roure et al. (2013).

This is probably the most problematic issue when handling NGS data. Read coverage often varies widely across samples, and consequently missing data are more abundant in some samples than in others. This “biased missing” must lead to a decline in accuracy. How this type of missing data affects tree shape is yet to be studied.
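One simple way to see whether missing data in a data set are mostly “taxa-based” or “locus-based” is to tabulate the missing fraction per taxon and per locus. The sketch below assumes each locus is a non-empty dictionary of aligned sequences and that a taxon absent from a locus counts as entirely missing for that locus. The function name and the toy data are hypothetical.

```python
# Assumption: gaps, '?' and N are all treated as missing data.
MISSING = set("-?Nn")


def missing_summary(loci, taxa):
    """Return (per_taxon, per_locus) fractions of missing characters.

    `loci` maps locus name -> {taxon: aligned sequence}; every locus is
    assumed to contain at least one sequence. A taxon absent from a
    locus is counted as missing over the whole locus length.
    """
    missing_by_taxon = {t: 0 for t in taxa}
    per_locus = {}
    total_sites = 0
    for name, aln in loci.items():
        length = len(next(iter(aln.values())))
        total_sites += length
        locus_missing = 0
        for t in taxa:
            seq = aln.get(t)
            m = length if seq is None else sum(c in MISSING for c in seq)
            missing_by_taxon[t] += m
            locus_missing += m
        per_locus[name] = locus_missing / (length * len(taxa))
    per_taxon = {t: m / total_sites for t, m in missing_by_taxon.items()}
    return per_taxon, per_locus


# Hypothetical toy data: sp3 is poorly covered and absent from locusB.
taxa = ["sp1", "sp2", "sp3"]
loci = {
    "locusA": {"sp1": "ACGT", "sp2": "ACGT", "sp3": "AC--"},
    "locusB": {"sp1": "GGCC", "sp2": "GGCA"},
}
per_taxon, per_locus = missing_summary(loci, taxa)
print(per_taxon)
print(per_locus)
```

A strongly uneven `per_taxon` table with a fairly even `per_locus` table would point to the taxa-based pattern that the papers above report as more harmful.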

—

The number of parameters to consider, and of their combinations, is huge when we work on large-scale multilocus phylogenies. Though a large number of papers test the accuracy of inference under particular model violations, we cannot cover all possibilities. Still, I found some important points, especially on the choice of methods and the treatment of missing loci/sites.